Notes on Michael Nielsen

Timeline

1960 Frank Rosenblatt invents the Perceptron neuron as a hardware device.

Artificial Neurons

Two types of artificial neuron: the perceptron and the sigmoid neuron.

Perceptron

The word perceptron is used in two ways. 1) It identifies a type of neuron, the perceptron neuron. 2) It identifies a network of perceptron neurons, sometimes called a multi-layer perceptron.

Both were invented by Frank Rosenblatt in the 1950s and 60s. The first perceptron was a hardware device. Rosenblatt was a psychologist, and he modeled the perceptron on the biological neuron.

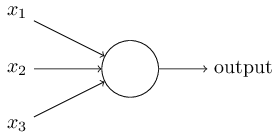

Multiple inputs: x₁, x₂, x₃

One output, boolean (1 or 0).

Inside the perceptron is a function that uses weights and a threshold.

One weight for each input: w₁, w₂, w₃

Multiply each input by its weight and sum the products.

weighted sum of three inputs = x₁w₁ + x₂w₂ + x₃w₃

For any number of inputs, indexed by j, weighted sum = ∑ⱼxⱼwⱼ

Compare the weighted sum to the threshold to determine the output.

output = 0 if ∑ⱼxⱼwⱼ ≤ threshold

output = 1 if ∑ⱼxⱼwⱼ > threshold
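
The threshold rule above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `perceptron_threshold` and the example weights and threshold are assumptions chosen for the demo.

```python
def perceptron_threshold(inputs, weights, threshold):
    """Return 1 if the weighted sum of the inputs exceeds the threshold, else 0."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Weighted sum: multiply each input by its weight and add the products.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum > threshold else 0


# Hypothetical values: three binary inputs, weights 6, 2, 2, threshold 5.
print(perceptron_threshold([1, 0, 0], [6, 2, 2], 5))  # weighted sum 6 > 5
print(perceptron_threshold([0, 1, 1], [6, 2, 2], 5))  # weighted sum 4 <= 5
```

With these values the first input alone is enough to fire the neuron, while the other two inputs together are not.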

Using the language of matrix math, express the inputs and weights as vectors X and W, and the weighted sum as the dot product.

∑ⱼxⱼwⱼ = X ⋅ W

Also, instead of comparing to the threshold, move it to the other side of the inequality and rename it: define the bias b = −threshold, then compare to 0.

output = 0 if X ⋅ W + b ≤ 0

output = 1 if X ⋅ W + b > 0

ƒ(X) = 1 if X ⋅ W + b > 0, else 0
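
The bias form can be sketched as follows. The helper names `dot` and `perceptron` are hypothetical, and the weights (−2, −2) and bias 3 are illustrative values that happen to make a two-input perceptron behave like a NAND gate.

```python
def dot(xs, ws):
    """Dot product of two equal-length sequences."""
    return sum(x * w for x, w in zip(xs, ws))


def perceptron(xs, ws, b):
    """Return 1 if X . W + b > 0, else 0."""
    return 1 if dot(xs, ws) + b > 0 else 0


# Illustrative parameters (an assumption for this sketch).
NAND_WEIGHTS = [-2, -2]
NAND_BIAS = 3

for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2, perceptron([x1, x2], NAND_WEIGHTS, NAND_BIAS))
```

Only the input (1, 1) gives a negative value, −2 − 2 + 3 = −1, so it is the only case with output 0.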

Now tune the weights and bias until you get the results you want. Because b = −threshold, a higher (more positive) bias makes it easier to get an output of 1, and a lower (more negative) bias makes it harder. Use this [perceptron 1 spreadsheet](https://docs.google.com/a/hagstrand.com/spreadsheets/d/1EbkBAdOLGc13XdM4OT9vblJEh6Jq-THXIg9CwgrDZJA/edit?usp=sharing) to experiment.
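
As a rough way to experiment without the spreadsheet, the sketch below counts how many binary input combinations fire the neuron as the bias changes, using the rule that the output is 1 when X ⋅ W + b > 0. The function `count_firing` and the weights [1, 1, 1] are assumptions made up for this demo.

```python
from itertools import product


def perceptron(xs, ws, b):
    """Return 1 if X . W + b > 0, else 0."""
    return 1 if sum(x * w for x, w in zip(xs, ws)) + b > 0 else 0


def count_firing(ws, b):
    """Count how many binary input combinations produce output 1."""
    return sum(perceptron(xs, ws, b) for xs in product((0, 1), repeat=len(ws)))


weights = [1, 1, 1]  # hypothetical weights, one per input
for bias in (-3, -2, -1, 0, 1):
    # Of the 8 possible inputs, 0, 1, 4, 7, and 8 fire, respectively.
    print(bias, count_firing(weights, bias))
```

Under this convention, the count of firing inputs never decreases as the bias grows.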

Network of Perceptrons

![Perceptron Network](perceptronnetwork.png)

Sigmoid Neuron

Sigmoid = Logistic

Just like a perceptron, the sigmoid neuron has inputs x₁, x₂, …. But instead of being just 0 or 1, these inputs can take on any value between 0 and 1. So, for instance, 0.638… is a valid input for a sigmoid neuron. Also just like a perceptron, the sigmoid neuron has a weight for each input, w₁, w₂, …, and an overall bias, b. But the output is not restricted to 0 or 1. Instead, it is σ(X ⋅ W + b), where σ is called the sigmoid function.

Incidentally, σ is sometimes called the logistic function, and this class of neurons is called logistic neurons. It's useful to remember this terminology, since these terms are used by many people working with neural nets. However, these notes stick with the sigmoid terminology. The sigmoid function is defined by:

σ(z) ≡ 1 / (1 + e⁻ᶻ)

With z = ∑ⱼwⱼxⱼ + b, the output of the sigmoid neuron is:

output = 1 / (1 + exp(−∑ⱼwⱼxⱼ − b))
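
A minimal sketch of the sigmoid function σ(z) = 1 / (1 + exp(−z)) and a sigmoid neuron, using only the standard library. The names `sigmoid` and `sigmoid_neuron` are hypothetical. The two-branch form is a common trick, added here as an assumption, to keep `math.exp` from overflowing for large negative z; both branches are algebraically the same function.

```python
import math


def sigmoid(z):
    """Logistic function 1 / (1 + exp(-z)), written to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, use the equivalent form exp(z) / (1 + exp(z)).
    ez = math.exp(z)
    return ez / (1.0 + ez)


def sigmoid_neuron(xs, ws, b):
    """Output of a sigmoid neuron: sigmoid of the weighted sum plus bias."""
    z = sum(w * x for w, x in zip(ws, xs)) + b
    return sigmoid(z)


print(sigmoid(0))  # 0.5, the midpoint of the curve
print(sigmoid_neuron([0.638, 0.2], [1.0, -1.0], 0.0))  # a value strictly between 0 and 1
```

Unlike the perceptron, small changes in the weights or bias produce small changes in the output, since σ is a smooth curve rather than a step.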

Sigmoid means resembling the Greek letter sigma (in its word-final form ς) or the Latin letter S.

Note: the Greek letter sigma is written Σ in uppercase and σ in lowercase, or ς at the end of a word.

References

Michael A. Nielsen, Neural Networks and Deep Learning, Determination Press, 2015, http://neuralnetworksanddeeplearning.com/

Contents

- What this book is about

- On the exercises and problems

- Using neural nets to recognize handwritten digits

- How the backpropagation algorithm works

- Improving the way neural networks learn

- A visual proof that neural nets can compute any function

- Why are deep neural networks hard to train?

- Deep learning

- Appendix: Is there a simple algorithm for intelligence?

- Acknowledgements

- Frequently Asked Questions